US Companies Combat AI Bias: A Deep Dive into Digital Ethics by Q4 2026

The rapid proliferation of Artificial Intelligence (AI) across virtually every sector of the US economy has brought unprecedented opportunities for innovation, efficiency, and growth. From personalized recommendations and predictive analytics to autonomous vehicles and medical diagnostics, AI is reshaping how we live, work, and interact. However, this transformative power is not without its perils. A growing concern, both within the tech industry and among the general public, is bias embedded within AI algorithms. This bias, often stemming from flawed data, design choices, or societal prejudices, can lead to discriminatory outcomes, perpetuate inequalities, and erode trust in these systems. Recognizing the ethical, legal, and reputational risks, US companies are increasingly prioritizing the development and implementation of digital ethics frameworks to combat AI bias. This article examines the measures and strategies US companies are adopting to mitigate AI bias, their progress so far, and the advancements expected by the fourth quarter of 2026.

The urgency to address AI bias is not merely a matter of corporate social responsibility; it is becoming a strategic imperative. Regulatory bodies are beginning to scrutinize AI systems more closely, and consumers are becoming more aware of, and vocal about, the ethical implications of AI. Companies that fail to address AI bias risk fines, legal challenges, public backlash, and a significant loss of market trust. Understanding and actively mitigating AI bias is therefore paramount for sustained success and ethical leadership in the digital age. The sections below cover the approaches US companies are employing to make their AI systems fair, transparent, and accountable.

The Pervasiveness of AI Bias: Understanding the Landscape

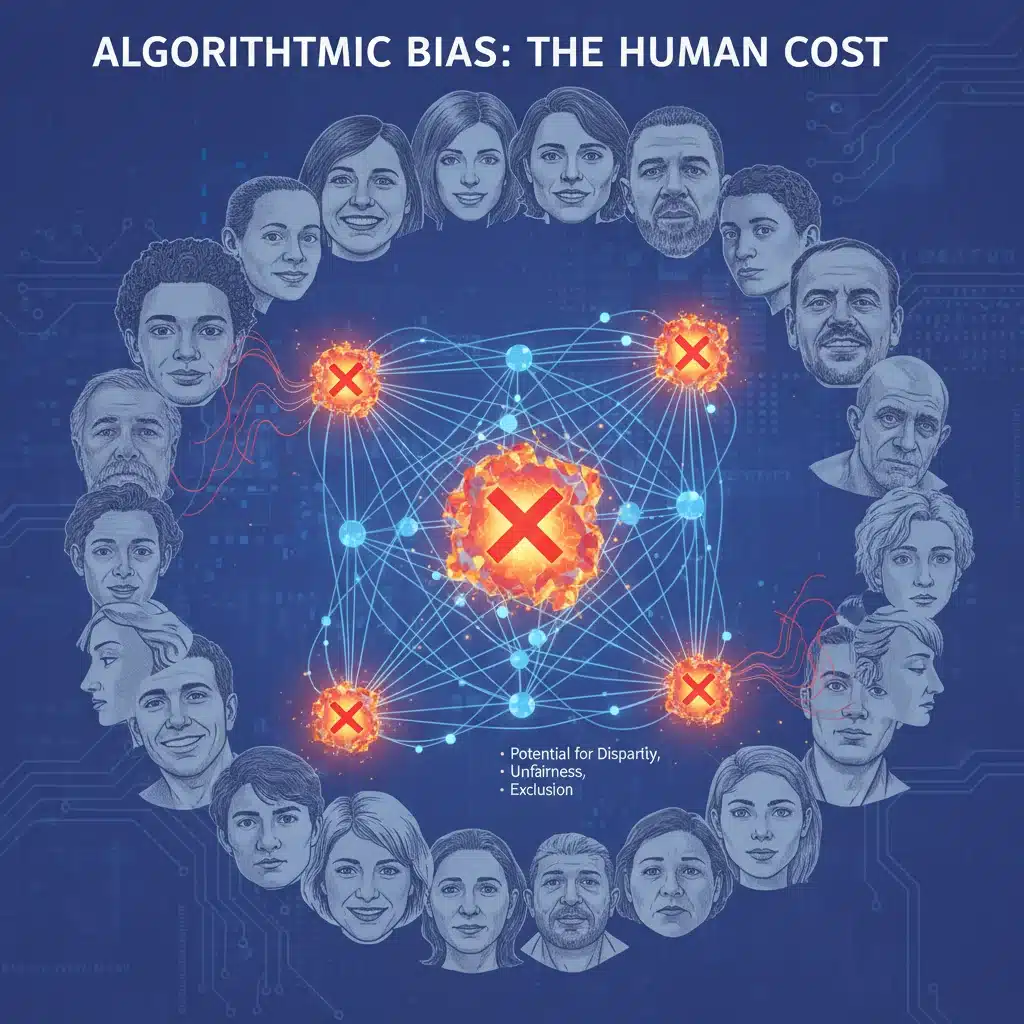

Before delving into solutions, it’s crucial to grasp the various forms and sources of AI bias. Bias in AI is not a monolithic concept; it manifests in several ways, each requiring distinct mitigation strategies. The most common types include:

- Data Bias: This is arguably the most prevalent source. If the data used to train an AI model is unrepresentative, incomplete, or reflects existing societal prejudices, the model will inevitably learn and amplify those biases. Examples include historical data that underrepresents certain demographics, or data collected with inherent human biases.

- Algorithmic Bias: Even with relatively unbiased data, the design of the algorithm itself can introduce bias. This can stem from the choice of features, the mathematical models used, or the optimization objectives that inadvertently favor certain outcomes over others.

- Interaction Bias: This occurs when AI systems learn from real-world interactions that are themselves biased. For instance, a chatbot learning from biased user conversations could start reflecting those biases.

- Systemic Bias: This refers to biases that are deeply ingrained within the societal structures and institutions, which AI systems can then reflect and exacerbate if not carefully designed and monitored.

The consequences of these biases can be severe and far-reaching. In hiring algorithms, biased AI can disproportionately reject qualified candidates from underrepresented groups. In facial recognition technology, error rates can be significantly higher for women and people of color, leading to false arrests or misidentifications. In credit scoring, biased models can deny loans to deserving individuals based on irrelevant demographic factors. In healthcare, diagnostic AI could misdiagnose or undertreat certain patient populations. These examples underscore why effective bias mitigation is not just an ethical matter but also essential for equitable societal functioning.

The complexity of identifying and quantifying bias further compounds the challenge. AI models, particularly deep learning networks, are often described as ‘black boxes,’ making it difficult to understand how they arrive at their decisions. This lack of transparency makes it harder to pinpoint the source of bias and develop targeted interventions. As US companies navigate this intricate landscape, they are investing heavily in research and development to create tools and methodologies that can effectively detect, measure, and ultimately reduce AI bias.

Strategic Approaches to AI Bias Mitigation by US Companies

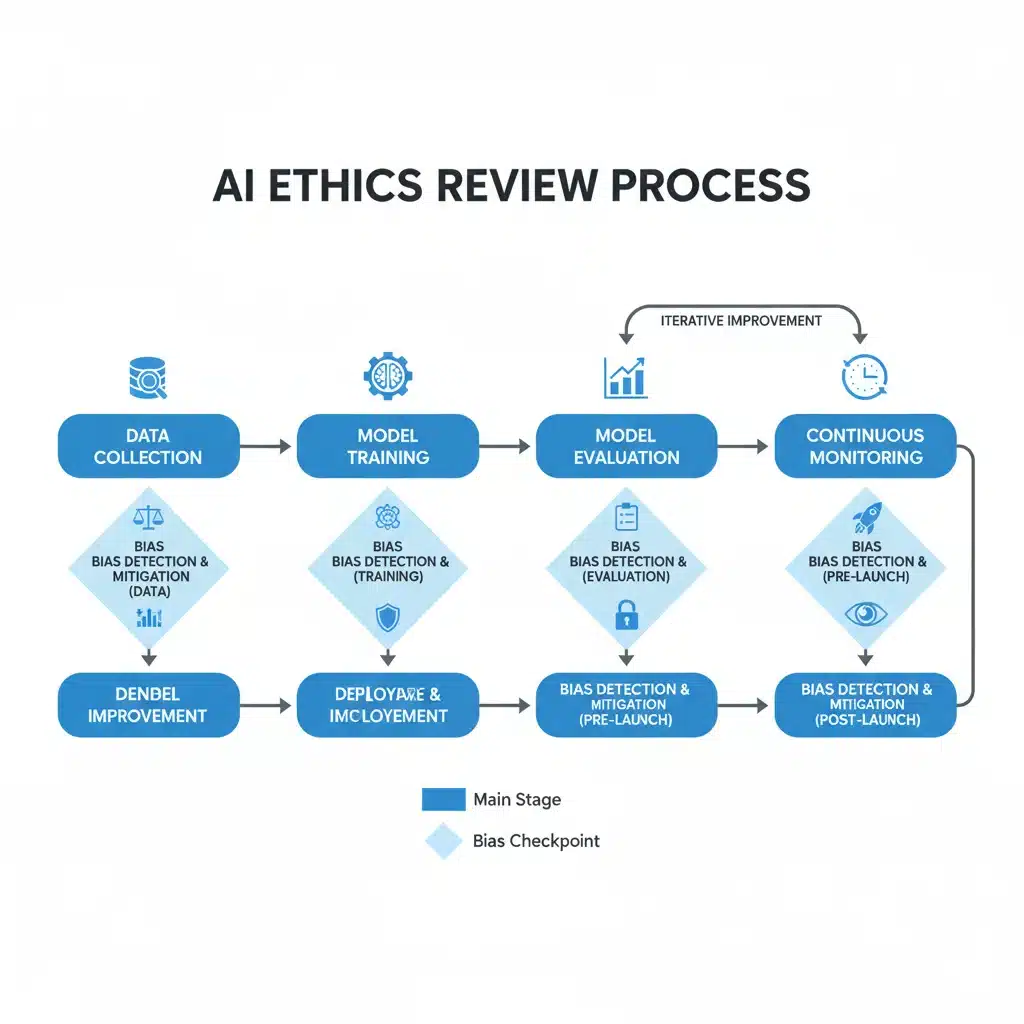

By Q4 2026, many US companies are projected to have implemented robust, multi-pronged strategies for mitigating AI bias. These strategies encompass a range of technical, organizational, and cultural shifts:

1. Data-Centric Solutions: The Foundation of Fairness

Recognizing that data is the lifeblood of AI, a significant focus is on improving data quality and representativeness. Companies are:

- Auditing and Curating Training Data: Rigorous audits of existing datasets are being conducted to identify and rectify underrepresentation, overrepresentation, or embedded historical biases. This often involves demographic analysis of data points and a critical review of data collection methodologies.

- Synthetic Data Generation: To address data scarcity for minority groups or sensitive categories, companies are exploring the use of synthetic data generation techniques. This involves creating artificial data that mimics the statistical properties of real data but does not contain personally identifiable information, thereby helping to balance datasets without compromising privacy.

- Data Augmentation and Rebalancing: Techniques such as oversampling minority classes or undersampling majority classes are being employed to create more balanced datasets, preventing the AI from learning skewed patterns.

- Fairness-Aware Feature Engineering: Developers are being trained to critically evaluate features used in models, ensuring they are relevant and do not inadvertently encode biases. This involves careful consideration of proxy variables that might correlate with protected attributes.
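The auditing and rebalancing steps above can be sketched in a few lines. This is a minimal illustration rather than any particular company's pipeline: the record layout, the `group` field, and the helper names `group_shares` and `oversample_to_balance` are all assumptions made for the example.

```python
import random
from collections import Counter


def group_shares(records, group_key):
    """Return each group's share of the dataset as a fraction of all records."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {g: n / total for g, n in counts.items()}


def oversample_to_balance(records, group_key, seed=0):
    """Randomly duplicate records from smaller groups until every group
    has as many records as the largest group."""
    rng = random.Random(seed)
    by_group = {}
    for r in records:
        by_group.setdefault(r[group_key], []).append(r)
    target = max(len(rows) for rows in by_group.values())
    balanced = []
    for rows in by_group.values():
        balanced.extend(rows)
        # Draw the shortfall with replacement from the group's own records.
        balanced.extend(rng.choices(rows, k=target - len(rows)))
    return balanced


# Hypothetical toy dataset: 8 records from group "A", 2 from group "B".
data = [{"group": "A", "label": i % 2} for i in range(8)] + [
    {"group": "B", "label": i % 2} for i in range(2)
]

before = group_shares(data, "group")
after = group_shares(oversample_to_balance(data, "group"), "group")
print(before)  # audit step: group "B" makes up only 20% of the data
print(after)   # after oversampling, both groups have equal shares
```

Random oversampling only duplicates existing records, so it adds no new information about the smaller group, and it should be applied to the training split only so that duplicated records do not leak into evaluation data.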

2. Algorithmic and Model-Centric Interventions

Beyond data, companies are also refining the algorithms themselves to promote fairness:

- Fairness Metrics and Optimization: Integrating fairness metrics (e.g., demographic parity, equalized odds, predictive parity) directly into the model training process. This involves developing algorithms that not only optimize for accuracy but also for fairness across different demographic groups.

- Explainable AI (XAI) for Transparency: Investing in XAI techniques to make AI decisions more interpretable. By understanding why an AI model made a particular decision, developers can better identify and correct biased reasoning. Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) are gaining traction.

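- Adversarial Debiasing: Employing adversarial learning techniques in which a predictor network is trained on the main task while a second, adversary network tries to infer the protected attribute from the predictor’s outputs. The predictor is penalized whenever the adversary succeeds, which pushes it toward predictions that carry less information about group membership.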

- Regularization Techniques: Applying various regularization methods during model training to prevent overfitting to biased patterns in the data.
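To make the fairness metrics named above concrete, the sketch below computes two of them, demographic parity and equalized odds, as gaps between groups on a small hypothetical set of predictions. The function names and the toy data are assumptions for illustration; the toolkits mentioned elsewhere in this article provide production-grade versions.

```python
def rate(values):
    """Mean of a list of 0/1 values; 0.0 for an empty list."""
    return sum(values) / len(values) if values else 0.0


def selection_rate(y_pred, groups, g):
    """Share of group g that receives a positive prediction."""
    return rate([p for p, gg in zip(y_pred, groups) if gg == g])


def conditional_rate(y_true, y_pred, groups, g, label):
    """Positive-prediction rate in group g among records whose true label
    equals `label` (label=1 gives the TPR, label=0 gives the FPR)."""
    return rate([p for t, p, gg in zip(y_true, y_pred, groups)
                 if gg == g and t == label])


def demographic_parity_difference(y_pred, groups):
    """Largest gap in selection rate between any two groups."""
    rates = [selection_rate(y_pred, groups, g) for g in set(groups)]
    return max(rates) - min(rates)


def equalized_odds_difference(y_true, y_pred, groups):
    """Larger of the between-group TPR gap and FPR gap."""
    gaps = []
    for label in (1, 0):
        rates = [conditional_rate(y_true, y_pred, groups, g, label)
                 for g in set(groups)]
        gaps.append(max(rates) - min(rates))
    return max(gaps)


# Hypothetical predictions for two groups of four people each.
groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

dp_gap = demographic_parity_difference(y_pred, groups)
eo_gap = equalized_odds_difference(y_true, y_pred, groups)
print(dp_gap, eo_gap)
```

A value of 0 on either metric means the groups are treated identically by that criterion. One common way to bring such a metric into training is to add the gap as a penalty term or a constraint alongside the accuracy objective.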

3. Robust Testing and Validation Frameworks

Even with careful data and algorithm design, continuous testing is essential to ensure fairness:

- Bias Audits and Stress Testing: Regularly auditing AI models for bias using diverse test sets that specifically probe for discriminatory outcomes across different demographic groups. This includes stress-testing models with deliberately biased inputs to identify vulnerabilities.

- Red Teaming: Employing dedicated teams to intentionally try to break or find biases in AI systems, much like cybersecurity red teams. This proactive approach helps uncover hidden biases before deployment.

- A/B Testing and Shadow Mode Deployment: Deploying new AI models alongside existing ones (or in a ‘shadow mode’ where their decisions are not live) to compare performance and fairness metrics in real-world scenarios before full rollout.

- Post-Deployment Monitoring: Implementing continuous monitoring systems to detect emergent biases in live AI systems. As real-world data can evolve, biases can appear over time, necessitating ongoing vigilance.
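Post-deployment monitoring can be as simple as tracking per-group decision rates over a sliding window and raising a flag when they drift apart. The class below is a hypothetical sketch: the name `SelectionRateMonitor`, the window size, the 0.1 gap threshold, and the minimum sample size are all illustrative assumptions, not recommended values.

```python
from collections import deque


class SelectionRateMonitor:
    """Track positive-decision rates per group over a sliding window and
    flag when the gap between groups exceeds a threshold."""

    def __init__(self, window=1000, max_gap=0.1, min_per_group=30):
        self.decisions = deque(maxlen=window)  # oldest entries drop off
        self.max_gap = max_gap
        self.min_per_group = min_per_group

    def record(self, group, decision):
        """Store one live decision (truthy = positive outcome)."""
        self.decisions.append((group, int(bool(decision))))

    def rates(self):
        """Selection rate per group, skipping groups with too few samples."""
        totals, positives = {}, {}
        for g, d in self.decisions:
            totals[g] = totals.get(g, 0) + 1
            positives[g] = positives.get(g, 0) + d
        return {g: positives[g] / n for g, n in totals.items()
                if n >= self.min_per_group}

    def gap(self):
        """Largest selection-rate gap, or None if fewer than two groups
        have enough data to compare."""
        r = self.rates()
        if len(r) < 2:
            return None
        return max(r.values()) - min(r.values())

    def alert(self):
        gap = self.gap()
        return gap is not None and gap > self.max_gap


# Simulated traffic: group "A" is approved half the time, "B" a quarter.
monitor = SelectionRateMonitor(window=200, max_gap=0.1, min_per_group=20)
for i in range(100):
    monitor.record("A", i % 2 == 0)
    monitor.record("B", i % 4 == 0)

print(monitor.rates(), monitor.alert())
```

The minimum sample size keeps the monitor from alerting on a handful of decisions. In practice the same pattern can be used in shadow mode by feeding it the candidate model's decisions instead of the live ones.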

4. Organizational and Governance Structures for Digital Ethics

Technical solutions alone are insufficient. US companies are building robust organizational frameworks to embed digital ethics into their corporate DNA:

- Establishing AI Ethics Boards and Committees: Many leading companies are forming interdisciplinary ethics boards comprising engineers, ethicists, legal experts, and social scientists to guide AI development and deployment.

- Developing Internal AI Ethics Guidelines and Principles: Crafting clear, actionable ethical guidelines that inform every stage of the AI lifecycle, from conception to retirement. These principles often emphasize fairness, transparency, accountability, and privacy.

- Training and Education Programs: Providing comprehensive training for all employees involved in AI development, deployment, and management on ethical AI principles, bias detection, and mitigation techniques. This fosters a culture of responsible AI.

- Dedicated Responsible AI Teams: Creating specialized teams focused solely on ensuring the ethical development and deployment of AI, working closely with product and engineering teams.

- Ethical Impact Assessments: Mandating ethical impact assessments (EIAs) for new AI projects, similar to privacy impact assessments, to proactively identify and address potential ethical risks, including bias.

5. Collaboration and Industry Standards

The challenge of AI bias is too complex for any single company to tackle in isolation. US companies are actively engaging in:

- Cross-Industry Collaborations: Participating in consortia and initiatives (e.g., Partnership on AI, AI Ethics Consortiums) to share best practices, research findings, and collectively develop industry standards for ethical AI.

- Academic Partnerships: Collaborating with universities and research institutions to advance the state of the art in AI ethics research, particularly in areas like bias detection, fairness algorithms, and explainability.

- Engagement with Policy Makers: Proactively engaging with government bodies and regulators to help shape sensible and effective policies around AI ethics and bias, ensuring that regulations are both protective and conducive to innovation.

- Open-Source Contributions: Contributing to open-source tools and frameworks that facilitate bias detection and mitigation, making these resources accessible to a wider community and accelerating progress.

Challenges and Roadblocks in US AI Bias Mitigation

Despite significant efforts, US companies face several formidable challenges in their quest for comprehensive AI bias mitigation:

- Defining and Measuring Fairness: Fairness itself is a multifaceted concept with no single, universally accepted definition. What constitutes ‘fair’ in one context might be considered biased in another. Different fairness metrics can also be mutually incompatible: when groups have different base rates, for example, a model generally cannot satisfy demographic parity and equalized odds at the same time, making trade-offs inevitable and complex.

- The ‘Black Box’ Problem: As mentioned, the inherent complexity of many advanced AI models makes it incredibly difficult to trace the exact pathways leading to biased outcomes, hindering targeted interventions.

- Data Scarcity and Quality: While efforts are underway to improve data, obtaining truly representative and unbiased datasets, especially for rare events or marginalized groups, remains a significant hurdle.

- Evolving Societal Norms: What is considered acceptable or fair can change over time. AI systems, once deployed, need to adapt to these evolving societal norms, which requires continuous monitoring and updates.

- Resource Constraints: Implementing comprehensive AI ethics programs requires significant investment in specialized talent, tools, and infrastructure, which can be a barrier for smaller companies.

- Regulatory Uncertainty: The regulatory landscape for AI ethics is still nascent and evolving. Companies often operate in a grey area, making it challenging to anticipate future compliance requirements.
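The tension between fairness definitions noted in the first challenge above can be shown with simple arithmetic. The sketch below uses two hypothetical groups with different base rates (the numbers are invented for illustration) and compares a perfectly accurate classifier with one adjusted to equalize selection rates.

```python
def rates(y_true, y_pred):
    """Return (selection rate, true positive rate, false positive rate)."""
    pos = [p for t, p in zip(y_true, y_pred) if t == 1]
    neg = [p for t, p in zip(y_true, y_pred) if t == 0]
    return (sum(y_pred) / len(y_pred), sum(pos) / len(pos), sum(neg) / len(neg))


# Hypothetical base rates: 6 of 10 people in group A qualify, 3 of 10 in B.
a_true = [1] * 6 + [0] * 4
b_true = [1] * 3 + [0] * 7

# Scenario 1: a perfectly accurate classifier (predictions equal the truth).
# TPR and FPR match across groups, so equalized odds holds, but the
# selection rates equal the base rates, so demographic parity fails.
a_perfect = rates(a_true, a_true)
b_perfect = rates(b_true, b_true)

# Scenario 2: raise group B's selection rate to match A's by also approving
# three unqualified people. Demographic parity now holds, but B's false
# positive rate rises above A's, so equalized odds fails.
b_parity_pred = [1] * 3 + [1] * 3 + [0] * 4
b_parity = rates(b_true, b_parity_pred)

print("perfect:", a_perfect, b_perfect)
print("parity-adjusted B:", b_parity)
```

Neither scenario is "the fair one" in the abstract. Which criterion to prioritize depends on the application, which is why the choice of metric is usually treated as a policy decision rather than a purely technical one.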

Projected Landscape by Q4 2026: A Vision for Ethical AI

By the fourth quarter of 2026, the landscape of AI bias mitigation in the US is expected to undergo substantial transformation. Here’s what we can anticipate:

- Standardization of Ethical AI Practices: While a universal standard might still be elusive, industry-specific best practices and ethical AI frameworks will become more standardized and widely adopted, particularly in high-stakes sectors like healthcare, finance, and criminal justice.

- Maturity of Responsible AI Tooling: The market for tools and platforms dedicated to AI ethics – including bias detection, explainability, and fairness optimization – will mature significantly, becoming more accessible and integrated into standard MLOps (Machine Learning Operations) pipelines.

- Increased Regulatory Scrutiny and Enforcement: Expect more concrete regulations and enforcement actions from US government bodies concerning AI bias. This will likely include requirements for AI impact assessments, transparency reports, and potentially algorithmic accountability audits.

- Competitive Differentiator: Companies with demonstrable commitments to ethical AI and effective bias mitigation will gain a significant competitive advantage. Trust will become a key differentiator, influencing consumer choice and business partnerships.

- Growth of AI Ethics as a Profession: The demand for AI ethicists, fairness engineers, and responsible AI product managers will surge, leading to the establishment of specialized academic programs and professional certifications.

- Greater Public Awareness and Demand: Public awareness of AI bias and demand for ethical AI will continue to grow, putting pressure on companies to be transparent and proactive in their mitigation efforts.

Case Studies and Industry Leaders in US AI Bias Mitigation

Several prominent US companies are already leading the charge in AI bias mitigation, offering valuable insights into effective strategies. While specific details often remain proprietary, their public commitments and published research indicate a clear direction:

- Google: Known for its AI Principles, Google has invested heavily in research on fairness, interpretability, and privacy. They have developed tools like ‘What-If Tool’ to help developers understand model behavior and potential biases, and their Responsible AI teams are actively involved in product development.

- IBM: IBM has been a vocal proponent of explainable AI and has developed the ‘AI Fairness 360’ open-source toolkit, offering a comprehensive suite of metrics and algorithms to detect and mitigate bias in AI models. Their ‘AI Ethics Board’ guides their internal policies and external engagements.

- Microsoft: Microsoft has published extensive Responsible AI principles and frameworks, emphasizing fairness, reliability, and transparency. They offer tools like ‘Fairlearn’ for bias mitigation and have dedicated teams working on AI ethics across their product portfolio.

- Salesforce: With a focus on ethical use of CRM and AI, Salesforce has an Office of Ethical and Humane Use. They provide guidelines and tools for their customers to ensure responsible deployment of AI solutions, particularly concerning data privacy and bias.

- Amazon: While facing past controversies related to AI bias in hiring, Amazon has since doubled down on its efforts to build more transparent and fair AI systems. They are investing in research and internal review processes to ensure their AI services are developed responsibly.

These examples illustrate a growing trend: AI bias mitigation is no longer an afterthought but an integral part of the AI development lifecycle. These companies are not just responding to external pressures but are proactively shaping the future of ethical AI.

The Role of Education and Culture in Ethical AI Development

Beyond technical tools and governance structures, the bedrock of successful AI bias mitigation lies in fostering a culture of ethical awareness and continuous learning within organizations. This involves:

- Interdisciplinary Collaboration: Encouraging data scientists, engineers, product managers, legal experts, ethicists, and even social scientists to work together from the very inception of an AI project. This diverse perspective helps identify potential biases and ethical dilemmas early on.

- Bias Awareness Training: Regular and mandatory training sessions for all employees involved in AI development and deployment. These sessions should cover the various types of bias, their potential impacts, and practical strategies for mitigation. This includes understanding cognitive biases that can inadvertently influence data collection or model design.

- Ethical Design Principles: Integrating ethical considerations into the design thinking process. This means asking critical questions about fairness, accountability, and transparency at every stage of product development, rather than treating ethics as a final checklist item.

- Whistleblower Protections and Feedback Mechanisms: Establishing clear channels for employees to raise ethical concerns about AI systems without fear of reprisal. This internal feedback loop is crucial for identifying and addressing issues that might be missed by formal audits.

- Cultivating a Responsible Innovation Mindset: Moving beyond merely avoiding harm to actively seeking to use AI for good. This involves considering the broader societal impact of AI systems and striving to build technologies that promote equity and justice.

By Q4 2026, it is anticipated that a significant number of US companies will have mature educational programs and a deeply ingrained culture that champions responsible AI development. This cultural shift is perhaps the most powerful long-term strategy for effective AI bias mitigation, as it empowers every individual involved in the AI lifecycle to be an ethical steward.

Conclusion: A Future of Responsible and Equitable AI

The journey towards truly fair, transparent, and accountable AI systems is complex and ongoing. However, the proactive and accelerating efforts of US companies in addressing AI bias demonstrate a clear commitment to navigating these challenges. By Q4 2026, we expect to see a more mature ecosystem of AI bias mitigation in the US, characterized by standardized practices, advanced technical tooling, robust governance frameworks, and a deeply embedded culture of ethical AI development.

The implications of successful AI bias mitigation are profound. It means AI systems that serve all segments of society equitably, fostering trust, reducing inequalities, and unlocking the full potential of this transformative technology for collective good. While vigilance and continuous effort will always be necessary, the current trajectory suggests a future where AI is not just intelligent, but also just and ethical by design. This ongoing commitment to digital ethics will not only safeguard individuals but also strengthen the very fabric of our increasingly AI-driven society.